The digital landscape has shifted. We are no longer just designing for human eyes or traditional search engine bots; we are designing for Large Language Models (LLMs) and AI-driven agents. If your site isn't readable by these new "users," your brand simply doesn't exist in the generated answers of 2026. Web design for AI crawlers is the practice of structuring your technical architecture so that AI agents can efficiently ingest, understand, and attribute your content.

To ensure your brand remains visible, you must move beyond old-school SEO. This means prioritizing clean code, high-density information, and explicit data relationships. At Memorable Design, we have seen that the sites winning the AI visibility race are those that treat crawlers as a first-class audience.

Why Traditional SEO Isn't Enough Anymore

For decades, we optimized for keywords and backlinks. While those still matter, AI crawlers like GPTBot, ClaudeBot, and Google’s AI-focused crawlers look for "semantic signals." They don't just want to find a keyword; they want to map the relationship between your ideas.

If your website is a tangled mess of heavy scripts and hidden text, an AI agent might skip your site entirely to save on "compute cost." High-quality web design for AI crawlers ensures that your site is the path of least resistance for these models. When an AI can easily parse your data, it is more likely to cite you as a source, boosting your authority in an AI-first world.

1. Simplified DOM Depth and HTML Structure

The Document Object Model (DOM) is the skeletal structure of your webpage. In the past, web designers often nested elements deeply to achieve complex layouts. However, deep nesting creates "noise" for AI agents.

AI crawlers prefer a flat, semantic hierarchy. Using <div> tags for everything makes it harder for an agent to distinguish a header from a footer or a sidebar. By using semantic HTML5 tags like <article>, <section>, and <aside>, you provide a roadmap for the crawler. This is a foundational step in HTML for AI search.
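
As a rough way to see the difference between deep <div> nesting and a flatter semantic layout, the sketch below measures maximum nesting depth and counts semantic tags using only Python's standard library. The class name, the helper, and both sample snippets are our own illustrations, and the depth count assumes reasonably well-formed markup (unclosed tags will inflate it).

```python
from html.parser import HTMLParser

# Void elements never get a closing tag, so they must not increase depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}
SEMANTIC_TAGS = {"article", "section", "aside", "header", "footer", "nav", "main"}


class StructureAudit(HTMLParser):
    """Tracks maximum nesting depth and counts semantic tags vs. <div> tags."""

    def __init__(self):
        super().__init__()
        self.depth = 0
        self.max_depth = 0
        self.semantic_count = 0
        self.div_count = 0

    def handle_starttag(self, tag, attrs):
        if tag in SEMANTIC_TAGS:
            self.semantic_count += 1
        elif tag == "div":
            self.div_count += 1
        if tag not in VOID_TAGS:
            self.depth += 1
            self.max_depth = max(self.max_depth, self.depth)

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self.depth > 0:
            self.depth -= 1


def audit_structure(html: str) -> StructureAudit:
    """Parse an HTML string and return the populated audit object."""
    parser = StructureAudit()
    parser.feed(html)
    parser.close()
    return parser


# Hypothetical snippets: the same content, nested two different ways.
div_soup = "<div><div><div><div><p>Pricing</p></div></div></div></div>"
semantic = "<main><article><h1>Pricing</h1><p>Plans</p></article></main>"

for label, html in (("div soup", div_soup), ("semantic", semantic)):
    result = audit_structure(html)
    print(label, result.max_depth, result.semantic_count, result.div_count)
```

There is no official depth threshold that we know of; the numbers are most useful for comparing templates against each other.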

The Technical Impact of "Code-to-Content" Ratio

AI crawlers work within limited time and compute budgets. If your page has 5,000 lines of code but only 500 words of actual content, the "signal-to-noise" ratio is poor. Streamlining your HTML allows the crawler to extract the "Expertise" and "Experience" (key pillars of the 2026 Core Update) without getting lost in inline CSS and script clutter.
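
One simple way to put a number on that ratio is to divide the length of the visible text by the length of the full HTML source. The sketch below is an approximation under our own assumptions: `content_ratio` is a hypothetical helper, it counts characters rather than words or lines, and it only ignores the bodies of <script> and <style> tags.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip = 0  # greater than zero while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.chunks.append(data)


def content_ratio(html: str) -> float:
    """Return visible-text characters divided by total HTML characters."""
    if not html:
        return 0.0
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    # Collapse whitespace so indentation does not count as content.
    text = " ".join(" ".join(parser.chunks).split())
    return len(text) / len(html)


# Hypothetical pages: one lean, one padded with inline script.
lean = "<article><h1>Solar</h1><p>Panels convert light.</p></article>"
bloated = ("<div><script>" + "var x=1;" * 50 + "</script>"
           "<p>Panels convert light.</p></div>")

print(round(content_ratio(lean), 2), round(content_ratio(bloated), 2))
```

Treat the result as a relative signal for comparing pages on your own site, not as a score any crawler is known to compute.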

2. Managing JavaScript SEO for AI Agents

One of the biggest hurdles in modern web development is how we handle scripts. JavaScript SEO for AI matters because many AI crawlers still struggle with "heavy" client-side rendering. If your content only appears after a complex series of JavaScript executions, there is a high probability the AI crawler will see a blank page.

To solve this, we recommend Server-Side Rendering (SSR) or Static Site Generation (SSG). This ensures that the AI crawler receives a fully rendered HTML document the moment it hits your server.

Rendering Comparison Table

| Method | AI Crawlability | User Experience | Implementation Complexity |
| --- | --- | --- | --- |
| Client-Side (CSR) | Poor - Requires heavy resources | Fast once loaded | Low |
| Server-Side (SSR) | Excellent - Instant data access | Fast initial paint | Medium |
| Static (SSG) | Best - Cleanest HTML structure | Fastest overall | High (for large sites) |

At Memorable Design, we advocate for a "content-first" rendering path. By ensuring your primary text is visible in the initial HTML source, you satisfy the requirements of JavaScript SEO for AI and ensure your insights aren't ignored by LLMs.
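
To make "visible in the initial HTML source" testable, the sketch below checks whether key phrases appear in the raw HTML a crawler would receive before any JavaScript runs. The function name, the two sample pages, and the phrase list are hypothetical; in practice you would feed it the response body fetched from your own server.

```python
from html.parser import HTMLParser


class InitialText(HTMLParser):
    """Collects text present in raw HTML, ignoring script and style bodies."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._ignored = 0  # greater than zero while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._ignored += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._ignored:
            self._ignored -= 1

    def handle_data(self, data):
        if not self._ignored:
            self.parts.append(data)


def missing_from_initial_html(html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that a non-rendering crawler would not see."""
    parser = InitialText()
    parser.feed(html)
    parser.close()
    text = " ".join(" ".join(parser.parts).split()).lower()
    return [phrase for phrase in key_phrases if phrase.lower() not in text]


# Hypothetical responses: a client-rendered shell vs. a server-rendered page.
csr_shell = ('<html><body><div id="root"></div>'
             '<script>render("Our pricing starts at $49")</script></body></html>')
ssr_page = ('<html><body><main><h1>Pricing</h1>'
            '<p>Our pricing starts at $49</p></main></body></html>')

phrases = ["pricing starts at $49"]
print(missing_from_initial_html(csr_shell, phrases))
print(missing_from_initial_html(ssr_page, phrases))
```

In the client-rendered shell the phrase exists only inside a script, so it is reported as missing; in the server-rendered page it is found in the markup itself.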

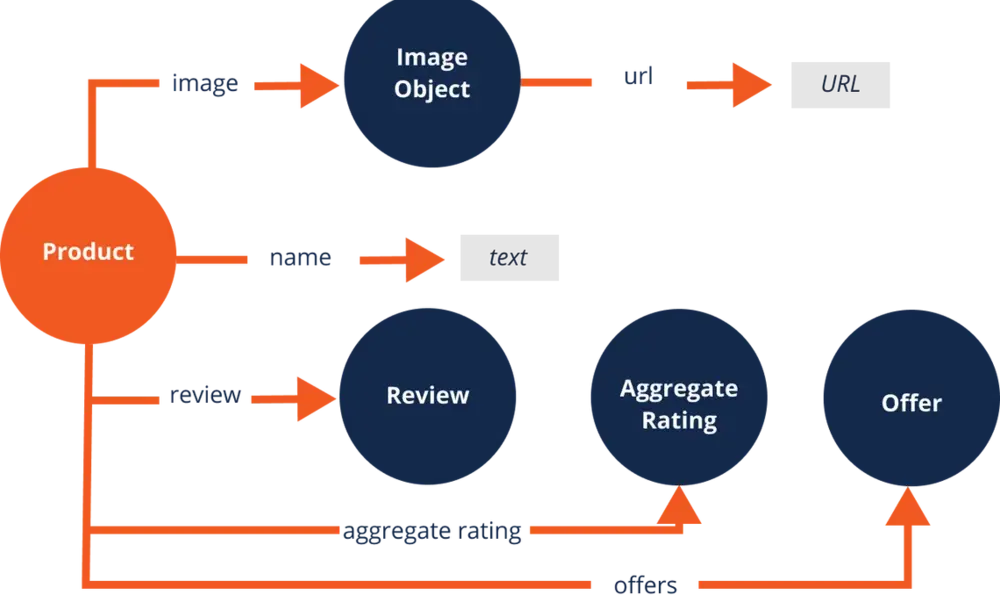

3. Implementing High-Density Schema Markup

If HTML is the skeleton, Schema.org markup is the "brain" of your site’s SEO. AI crawlers love structured data because it removes ambiguity. Instead of the AI guessing that a string of numbers is a price, Schema explicitly tells the bot: "This is the price, and it is in USD."

For web design for AI crawlers, you should go beyond basic "Article" or "Product" schema. In 2026, you need to use the about, mentions, and citation properties. This helps AI models understand the context of your work and how it relates to the broader web.

- Organization Schema: Clearly define your brand, including its official name (in our case, Memorable Design) and its social profiles.

- BreadcrumbList: Helps crawlers understand site architecture and hierarchy.

- Author Schema: Crucial for E-E-A-T; it links your content to a real human with verified expertise.

- FAQ Schema: Directly feeds into AI "Answer Boxes" and zero-click search results.
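
As an illustration of how the about, mentions, and citation properties fit together with author and publisher data, here is a minimal sketch that builds an Article JSON-LD object and wraps it in a script tag. The helper names, the author "Jane Doe," the topics, and the example.com URL are all placeholders we made up; only the property names come from Schema.org.

```python
import json


def build_article_jsonld(headline, author_name, publisher_name,
                         about, mentions, citations):
    """Build a Schema.org Article object as a plain dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
        # 'about' is the main subject; 'mentions' covers secondary entities.
        "about": [{"@type": "Thing", "name": topic} for topic in about],
        "mentions": [{"@type": "Thing", "name": topic} for topic in mentions],
        # 'citation' accepts text or CreativeWork; plain URLs are used here.
        "citation": citations,
    }


def to_script_tag(data: dict) -> str:
    """Serialize the object for embedding in the page's HTML."""
    # Escape "</" so a stray "</script>" inside the data cannot end the tag.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


article = build_article_jsonld(
    headline="Web Design for AI Crawlers",
    author_name="Jane Doe",                      # placeholder author
    publisher_name="Memorable Design",
    about=["AI crawler optimization"],
    mentions=["Server-side rendering", "Schema.org"],
    citations=["https://example.com/source"],    # placeholder URL
)
script_tag = to_script_tag(article)
print(script_tag)
```

Generating the markup from one function keeps the structured data consistent across templates; it is still worth validating the output with a structured data testing tool before shipping.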

4. The Shift Toward "Text-Centric" Design

Visuals are great for humans, but AI crawlers are text-hungry. A common mistake in modern design is placing critical information inside images or video without proper text alternatives. While AI systems can "see" images via computer vision, it is much more efficient (and accurate) for them to read text.

In the context of HTML for AI search, every visual element must have a text-based counterpart. This isn't just about "alt text" for accessibility; it’s about providing a descriptive transcript or a summary of the data presented in an infographic.
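
A first pass at finding visuals with no text counterpart can be automated. The sketch below lists images whose alt attribute is missing or empty; the class, the helper, and the sample markup are hypothetical. Note that it also flags purely decorative images, where an empty alt is legitimate, so treat the output as a review list rather than a list of errors.

```python
from html.parser import HTMLParser


class AltTextAudit(HTMLParser):
    """Records the src of every <img> that lacks non-empty alt text."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        # Valueless attributes arrive as None, so normalize before stripping.
        alt = (attributes.get("alt") or "").strip()
        if not alt:
            self.missing.append(attributes.get("src") or "(no src)")


def images_missing_alt(html: str) -> list[str]:
    """Return the src values of images without usable alt text."""
    audit = AltTextAudit()
    audit.feed(html)
    audit.close()
    return audit.missing


# Hypothetical page fragment with one described and one undescribed image.
page = (
    '<figure><img src="team.jpg" alt="The design team reviewing wireframes">'
    '</figure><img src="chart.png"><img src="spacer.gif" alt="">'
)
print(images_missing_alt(page))
```

Alt text alone will not cover a dense infographic; for those, the check only tells you where a written summary or transcript still needs to be added.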

Why Contextual Text Density Matters

When an AI bot crawls your page, it looks for "entities." If you are writing about "renewable energy," the bot expects to see related terms like "solar panels," "photovoltaic," and "sustainability." Designing your page to include these semantic clusters in visible text (not just hidden in metadata) improves your AI crawler optimization.

5. Controlling Crawl Budget and Access

Not all crawlers are created equal. Some AI companies may use your data without giving you the "attribution" you deserve. Part of a smart AI crawler optimization strategy is managing your robots.txt file and your X-Robots-Tag response headers.

You should proactively decide which AI agents are allowed to "train" on your data and which are only allowed to "index" it for search. This distinction is vital for protecting your intellectual property while maintaining visibility.

Strategic robots.txt Management

By explicitly naming agents like GPTBot or CCBot, you can direct them to your most valuable, high-E-E-A-T pages while blocking them from "thin" or utility pages (like checkout or login screens). This ensures the AI spends its "compute budget" on the content that actually builds your brand’s authority.
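
The sketch below shows one possible robots.txt along these lines and verifies it with Python's standard `urllib.robotparser` before deployment. The rules, paths, and example.com URLs are assumptions for illustration, not a recommended policy; here GPTBot is kept out of utility pages and CCBot is blocked entirely.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy. Disallow lines come before the broad Allow because
# Python's parser applies the first matching rule in each group.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /checkout/
Disallow: /login/
Allow: /

User-agent: CCBot
Disallow: /

User-agent: *
Disallow: /checkout/
Disallow: /login/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Placeholder URLs standing in for a high-value page and a utility page.
guide_url = "https://example.com/guides/ai-visibility"
checkout_url = "https://example.com/checkout/cart"

for agent in ("GPTBot", "CCBot", "SomeOtherBot"):
    print(agent,
          "guide:", parser.can_fetch(agent, guide_url),
          "checkout:", parser.can_fetch(agent, checkout_url))
```

Keep in mind that robots.txt is advisory: well-behaved crawlers honor it, but it does not technically prevent access, so sensitive pages still need real access controls.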

6. Internal Linking as a Semantic Map

Internal links are the "roads" that crawlers use to navigate your site. For web design for AI crawlers, these links do more than just pass "link juice"; they provide context.

When you link from a post about "Digital Marketing" to a post about "AI Visibility," you are telling the crawler that these two concepts are related. This helps the AI build a Knowledge Graph of your website. At Memorable Design, we suggest using descriptive anchor text that explains the relationship between the two pages, rather than generic phrases like "click here."
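
Generic anchor text is easy to audit mechanically. The sketch below collects every link's anchor text and flags the ones that match a short list of generic phrases; the phrase list, class name, and sample markup are our own assumptions, so extend the list to match your site's habits.

```python
from html.parser import HTMLParser

# Hypothetical starter list of phrases that carry no topical context.
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "this page"}


class AnchorAudit(HTMLParser):
    """Collects (href, anchor text) pairs for every <a> element."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None   # set while the parser is inside an <a> element
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href") or ""
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None


def generic_links(html: str) -> list[tuple[str, str]]:
    """Return links whose anchor text is one of the generic phrases."""
    audit = AnchorAudit()
    audit.feed(html)
    audit.close()
    return [(href, text) for href, text in audit.links
            if text.lower() in GENERIC_ANCHORS]


# Hypothetical paragraph with one descriptive and one generic link.
sample = ('<p>Read our <a href="/ai-visibility">guide to AI visibility for '
          'marketers</a> or <a href="/contact">click here</a>.</p>')
print(generic_links(sample))
```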

Building a Topic Cluster for AI

A topic cluster consists of a "pillar" page (the broad topic) and several "cluster" pages (specific sub-topics). This structure is a dream for AI crawler optimization because it proves you have deep, authoritative knowledge on a subject, which is exactly what the February 2026 Core Update prioritizes.
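
A cluster only works if the pillar and its cluster pages actually link to each other. As a small sketch, assuming you already have a map of each page to the internal URLs it links to (for example from a site crawl), the hypothetical helper below reports the missing links in either direction. The URLs are invented for illustration.

```python
def cluster_gaps(pillar: str, clusters: list[str],
                 links: dict[str, set[str]]) -> list[tuple[str, str]]:
    """Return (from_page, to_page) pairs for links the cluster is missing.

    `links` maps each page URL to the set of internal URLs it links to.
    """
    gaps = []
    for page in clusters:
        # The pillar should link down to every cluster page.
        if page not in links.get(pillar, set()):
            gaps.append((pillar, page))
        # Every cluster page should link back up to the pillar.
        if pillar not in links.get(page, set()):
            gaps.append((page, pillar))
    return gaps


# Hypothetical link map for a small topic cluster.
site_links = {
    "/ai-visibility": {"/ai-visibility/schema", "/ai-visibility/rendering"},
    "/ai-visibility/schema": {"/ai-visibility"},
    "/ai-visibility/rendering": set(),  # forgot to link back to the pillar
}
cluster_pages = ["/ai-visibility/schema", "/ai-visibility/rendering"]

print(cluster_gaps("/ai-visibility", cluster_pages, site_links))
```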

The Role of E-E-A-T in AI Search Visibility

The February 2026 Google Core Update doubled down on the need for Experience, Expertise, Authoritativeness, and Trustworthiness. AI crawlers are now trained to look for these specific traits.

- Experience: Use first-person accounts and case studies. Mentioning how we at Memorable Design handled a specific technical challenge adds "Experience" that an AI cannot hallucinate.

- Expertise: Use precise technical language. Don't just say "we make sites fast"; say "we optimize the Critical Rendering Path to reduce First Contentful Paint."

- Authoritativeness: Cite your sources and maintain a clean backlink profile.

- Trustworthiness: Be transparent. Ensure your "About Us" and "Contact" pages are easily accessible to crawlers to prove you are a legitimate entity.

Summarizing the AI-Ready Web

Building a website today requires a dual-track mind. You must delight the human visitor with a beautiful, fast interface while providing a structured, data-rich environment for AI agents. By focusing on web design for AI crawlers, you are future-proofing your digital presence.

At Memorable Design, we believe that the convergence of JavaScript SEO for AI and clean HTML for AI search is the only way to thrive in the next era of the internet. If an AI can't read you, it can't recommend you.

Conclusion

The evolution of search is moving toward a conversational, agent-driven model. To stay relevant, your technical choices must reflect this reality. By prioritizing web design for AI crawlers, you are not just optimizing for a search engine; you are training the future's most powerful recommendation engines to recognize your brand.

Focus on clean code, prioritize HTML for AI search, and ensure your JavaScript SEO for AI strategy is robust. The brands that win in 2026 will be those that are both human-centric and machine-legible. Let Memorable Design help you bridge that gap and secure your place in the AI knowledge graphs of tomorrow. Remember, effective AI crawler optimization is an ongoing process of refinement, and staying updated with the latest core updates is the only way to maintain your edge.

FAQs

What is the most important factor in web design for AI crawlers?

The most important factor is "Machine Readability." This means using semantic HTML, structured data (Schema), and ensuring your content is rendered on the server so AI bots can access the text without executing complex scripts.

How does JavaScript SEO for AI differ from traditional SEO?

Traditional JavaScript SEO focuses on how Googlebot renders JS. JavaScript SEO for AI covers a wider range of crawlers, many of which have less "patience" or processing power than Googlebot. Providing a fully rendered, flat HTML version of your site is often the safest bet for AI visibility.

Will AI crawlers hurt my website traffic?

They can. AI agents can produce "zero-click" searches, where the user gets an answer without leaving the AI interface, so some traffic loss is possible. However, by optimizing for these crawlers, you increase the chance of being cited as the "Source," which can drive high-intent, qualified traffic back to your site.

What is AI crawler optimization in simple terms?

It is the process of making your website easy for AI bots to "digest." Think of it as providing a clear, bulleted summary of your business that an AI can quickly memorize and repeat to others.

Does Memorable Design offer AI visibility audits?

Yes. At Memorable Design, we focus on technical audits that specifically check for DOM depth, schema accuracy, and script-load efficiency to ensure your brand is ready for the AI-first search landscape.